Shift change: an operator looks at her screen, which logs her in through facial recognition, and reads a new set of instructions. Once she finishes reading, a camera facing her senses that she has completed the page and switches the screen to a video feed from the machine’s forward-facing camera. The screen shows a pathway along the warehouse floor, superimposed over the camera feed, and she pilots her machine along it. As she approaches a set of tall, wide shelves at the end of an aisle lined with more of them, the screen highlights in bright green a pallet high on the third shelf; the superimposed pathway ends right in front of the pallet with a small square to indicate that this is the object she is to retrieve. As she reaches the square, the screen switches to the feed from a camera at the top of the machine and focuses on the highlighted load. She activates her lift forks and sees guidance lines showing when her tool tips are in line with the pallet 15 ft. (4.6 m) above her head; once they are in line, the machine’s tool control automatically stops the lift.

Scenes like this have played out in countless science fiction books and movies, including the opening of “Aliens” 34 years ago. Although that film is set more than 150 years from now, the future it predicted, at least as far as material handling and advanced driver assistance systems (ADAS) are concerned, is already here.

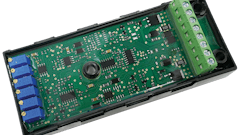

Including advanced electronics in platforms for machine intelligence can help create smart systems that let operators complete their tasks more efficiently, as in the scenario described above. CrossControl’s newly launched CCpilot V700, for instance, features at its heart an iMX8-based ARM application processor. This represents the very latest generation of NXP processor, which is significantly more powerful than the previous-generation iMX6 chipsets. The version of the iMX8 used by CrossControl in the V700 has three times the graphical performance of an iMX6-based display. This increased performance, paired with expanded API and software support, gives system adopters the tools needed to deliver the next step in operator support without requiring expensive bespoke systems.

One of the key newly supported graphics and compute APIs is Vulkan. Vulkan provides high-efficiency access to the onboard GPU, allowing for a better distribution of resources. This allows a system to run more applications, faster and at lower temperatures. In addition, Vulkan support is needed to make full use of the completely redesigned graphics backend of Qt 6, which is expected at the end of this year. Qt has long been one of the best-in-class toolchains for creating graphical user interfaces on embedded devices.

The iMX8 family of application processors is set to be NXP’s flagship for the next 10 years, ensuring a decade of support and software enhancements. This means that any device using this core can support a new or in-development machine for its entire life cycle. Out of the gate, the chipset includes expanded hardware-accelerated video codec support covering not only H.264 but also H.265 (up to 4K resolution at 60 frames per second), giving developers greater flexibility in how they incorporate video into their applications. It also allows GStreamer, an open source multimedia framework for creating media applications, to offer significantly smoother playback with lower resource requirements.
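As a rough illustration of how H.265 video might be handed to GStreamer, the sketch below builds a pipeline description string. It assumes the camera stream arrives over RTSP, which the article does not state, and it defaults to the software decoder `avdec_h265` from the gst-libav plugin set. The function name, the example URL, and the idea of swapping in a hardware decoder by name are illustrative assumptions; the actual hardware-accelerated decoder element depends on the board support package in use.

```python
def h265_playback_pipeline(rtsp_url: str,
                           decoder: str = "avdec_h265",
                           latency_ms: int = 100) -> str:
    """Build a GStreamer pipeline description for H.265 playback.

    Assumptions (not from the article): the source is an RTSP stream, and
    `decoder` names whichever H.265 decoder element is installed. The
    default is the software decoder; a hardware-accelerated element would
    be passed in here instead.
    """
    return (
        f"rtspsrc location={rtsp_url} latency={latency_ms} ! "
        "rtph265depay ! h265parse ! "
        f"{decoder} ! videoconvert ! autovideosink"
    )


if __name__ == "__main__":
    # Hypothetical camera address from the documentation-only IP range.
    print(h265_playback_pipeline("rtsp://192.0.2.10/stream"))
```

The resulting string could be passed to `gst-launch-1.0` for a quick check. Keeping the decoder as a parameter means the same description can be tried with a software decoder on a desktop and a hardware one on the target.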

The iMX8 used in the V700 has three times the graphical performance of previous versions. Image: CrossControl


Cameras add to smart machine capabilities

With the development of Ethernet cameras, complex camera systems can be deployed in vehicles to provide enhanced vision solutions. The Ethernet cameras can be paired with more advanced compute units to achieve an even greater impact on a machine’s day-to-day tasks. For example, larger machines that deploy multiple cameras can make use of more advanced displays to render all camera streams on a single unit. This approach takes advantage of the ease of connecting several Ethernet cameras to a single system. Operators can swipe between feeds, arrange multiple feeds into their preferred grid, load grid presets to suit specific, common tasks, and even intuitively control the feeds with touch-based pan, rotate and zoom functions to support more focused tasks that require precision and accuracy.

The Stoneridge-Orlaco EMOS camera, available with viewing angles from 30 to 180 degrees, is one such Ethernet camera capable of being combined with other components to create advanced vision systems.
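The idea of arranging several feeds into a grid and recalling task-specific presets can be sketched in a few lines. This is a minimal illustration rather than how any particular display implements it; the function names, camera identifiers, preset names, and screen size are all hypothetical.

```python
import math
from typing import Dict, List, Tuple

Rect = Tuple[int, int, int, int]  # x, y, width, height in pixels


def grid_layout(n_feeds: int, screen_w: int, screen_h: int) -> List[Rect]:
    """Return one tile per feed, filling a near-square grid row by row."""
    if n_feeds <= 0:
        return []
    cols = math.isqrt(n_feeds - 1) + 1   # smallest cols with cols*cols >= n
    rows = -(-n_feeds // cols)           # ceiling division
    tile_w, tile_h = screen_w // cols, screen_h // rows
    return [((i % cols) * tile_w, (i // cols) * tile_h, tile_w, tile_h)
            for i in range(n_feeds)]


# Hypothetical presets: task name -> ordered list of camera identifiers.
PRESETS: Dict[str, List[str]] = {
    "reversing": ["rear", "left", "right"],
    "loading": ["fork_top", "front"],
}


def layout_for_preset(name: str, screen_w: int, screen_h: int) -> Dict[str, Rect]:
    """Map each camera in a preset to the screen tile it should occupy."""
    feeds = PRESETS[name]
    return dict(zip(feeds, grid_layout(len(feeds), screen_w, screen_h)))


if __name__ == "__main__":
    print(layout_for_preset("reversing", 1280, 800))
```

A real interface would add gestures and per-tile pan and zoom on top of this, but the underlying layout step is no more than mapping feed indices to rectangles.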

Integration of EMOS cameras with advanced displays like the CCpilot V700 can take advantage of machine learning-derived solutions for deploying productivity aids. CrossControl engineers have been working on integrating and optimizing these solutions into the company’s systems over the last few years. Workloads that once required the most powerful systems can now run on later-generation chipsets and modern architectures to achieve the same results. The resulting machine learning-based ADAS can help to increase the productivity of machine operators and reduce the learning curve for new employees.

Development of Ethernet cameras has enabled complex camera systems to be deployed in vehicles to provide enhanced vision solutions. Image: Stoneridge-Orlaco


The feed from forward-facing EMOS cameras can be shown on the CCpilot display with an overlay showing guidance lines. This helps less experienced operators follow an optimized driving course that steers the machine clear of hazards and through complex routes. The system can even include hazard warnings that identify people or obstacles in the machine’s path by incorporating additional detection algorithms.
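One way to picture the guidance overlay is as a set of floor waypoints projected into the camera image. The sketch below uses a simple pinhole camera model and assumes a flat floor, a camera at a known height and downward pitch, and no lens distortion; a wide-angle camera would need distortion correction as well. All names and numbers are illustrative assumptions, not details of the CCpilot or EMOS products.

```python
import math
from typing import List, Optional, Tuple

Pixel = Tuple[float, float]


def project_floor_point(lateral_m: float, forward_m: float,
                        cam_height_m: float, pitch_down_rad: float,
                        focal_px: float, cx: float, cy: float) -> Optional[Pixel]:
    """Project a point on the floor into image coordinates (u right, v down).

    lateral_m is the offset to the right of the camera, forward_m the
    distance ahead of it. Returns None for points behind the image plane.
    """
    # Distance along the optical axis, which is tilted down by pitch_down_rad.
    depth = forward_m * math.cos(pitch_down_rad) + cam_height_m * math.sin(pitch_down_rad)
    if depth <= 0:
        return None
    # Offset along the camera's own "down" axis.
    down = cam_height_m * math.cos(pitch_down_rad) - forward_m * math.sin(pitch_down_rad)
    return (cx + focal_px * lateral_m / depth, cy + focal_px * down / depth)


def project_path(waypoints: List[Tuple[float, float]], **camera) -> List[Pixel]:
    """Project (lateral_m, forward_m) waypoints, dropping any not visible."""
    points = (project_floor_point(x, d, **camera) for x, d in waypoints)
    return [p for p in points if p is not None]


if __name__ == "__main__":
    cam = dict(cam_height_m=2.0, pitch_down_rad=math.radians(10),
               focal_px=800.0, cx=640.0, cy=360.0)
    print(project_path([(0.0, 3.0), (0.0, 6.0), (0.5, 9.0)], **cam))
```

The resulting pixel coordinates would then be drawn as a polyline over the video frame. More distant waypoints land closer to the horizon, which is what gives the overlay its perspective.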

While very experienced operators may be able to align their tools perfectly on the first attempt or dig a hole to a precise depth and circumference, the majority of vehicle operators rely on some form of external check. With the correct implementation of exterior cameras, it is even possible to control the tools themselves. This is particularly useful when an implement is in use outside the operator’s field of view, as is frequently the case with lift trucks and cranes. Knowing how and where to maneuver a machine from years of practice is a rare skill, whereas knowing because a camera provides the perfect viewpoint is a skill that is easy to acquire.
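The camera-assisted tool control described here can be reduced, for illustration, to a tolerance check between where the tool is and where it needs to be. The sketch below assumes a vision system already supplies height estimates for the fork tips and the target pallet; the names, the 2 cm tolerance, and the command strings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AlignmentResult:
    error_m: float   # signed; positive means the forks are below the target
    aligned: bool
    command: str     # "raise", "lower" or "stop"


def check_alignment(fork_height_m: float, pallet_height_m: float,
                    tolerance_m: float = 0.02) -> AlignmentResult:
    """Decide whether the lift should keep moving or stop.

    Both heights are assumed to come from an upstream vision system; the
    default tolerance is an illustrative value, not a validated one.
    """
    error = pallet_height_m - fork_height_m
    if abs(error) <= tolerance_m:
        return AlignmentResult(error, True, "stop")
    return AlignmentResult(error, False, "raise" if error > 0 else "lower")


if __name__ == "__main__":
    for height in (4.00, 4.59, 4.80):
        print(height, check_alignment(height, 4.6).command)
```

In practice a check like this would run on every frame, with the stop command overriding the operator’s lift input once the forks are within tolerance.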


While the majority of EMOS cameras are deployed outside the cabin, an increasing number of manufacturers are including interior cameras as part of a complete system. This allows the platform to make use of the interior camera for safety and security. Much as premium mobile phones and laptops now use some form of facial recognition, these in-cabin cameras can allow the device to use facial recognition as part of the vehicle’s normal start sequence, ensuring only authorized personnel can operate a given vehicle. Once an operator has passed this security check, a positive ID can also allow the machine to automatically set itself up for that user’s work schedule and personal preferences.

While in most cases it is not necessary to display the feed from an interior camera, the feed can still be used throughout the work day. It can aid productivity by automatically moving to the next screen once information has been read, allowing operators to keep their hands on the controls for longer. It can also improve overall safety by monitoring operators for fatigue or lack of focus: the machine learning-based artificial intelligence (AI) system can be trained to monitor and analyze eye movement and, when necessary, send a warning if it detects that the driver is tired or not paying sufficient attention to the task at hand.
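The article does not say how fatigue would be scored. One commonly cited measure is PERCLOS, the proportion of time the eyes are closed over a recent window, and the sketch below uses a simplified version of it purely as an illustration. It assumes an upstream model already labels each frame as eyes open or closed; the class name, window length, and threshold are hypothetical and not validated values.

```python
from collections import deque


class FatigueMonitor:
    """Sliding-window estimate of how often the operator's eyes are closed."""

    def __init__(self, window_frames: int = 900, closed_ratio_limit: float = 0.3):
        # 900 frames is 30 s at 30 frames per second (illustrative).
        self.samples = deque(maxlen=window_frames)
        self.limit = closed_ratio_limit

    def update(self, eyes_closed: bool) -> bool:
        """Record one frame; return True if a fatigue warning should be raised."""
        self.samples.append(eyes_closed)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough history yet to judge
        return sum(self.samples) / len(self.samples) > self.limit


if __name__ == "__main__":
    monitor = FatigueMonitor(window_frames=10, closed_ratio_limit=0.3)
    frames = [False] * 10 + [True] * 4
    print([monitor.update(f) for f in frames])
```

Waiting for a full window before warning avoids false alarms at start-up, at the cost of a short delay before monitoring becomes active.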

By taking advantage of these new tools, system developers can allow their machines to see more than ever before. Use of the information collected by these tools enables the creation of smarter machines which can increase productivity from the warehouse to the mountainside.

The Stoneridge-Orlaco EMOS cameras are available with viewing angles from 30 to 180 degrees. Image: Stoneridge-Orlaco
